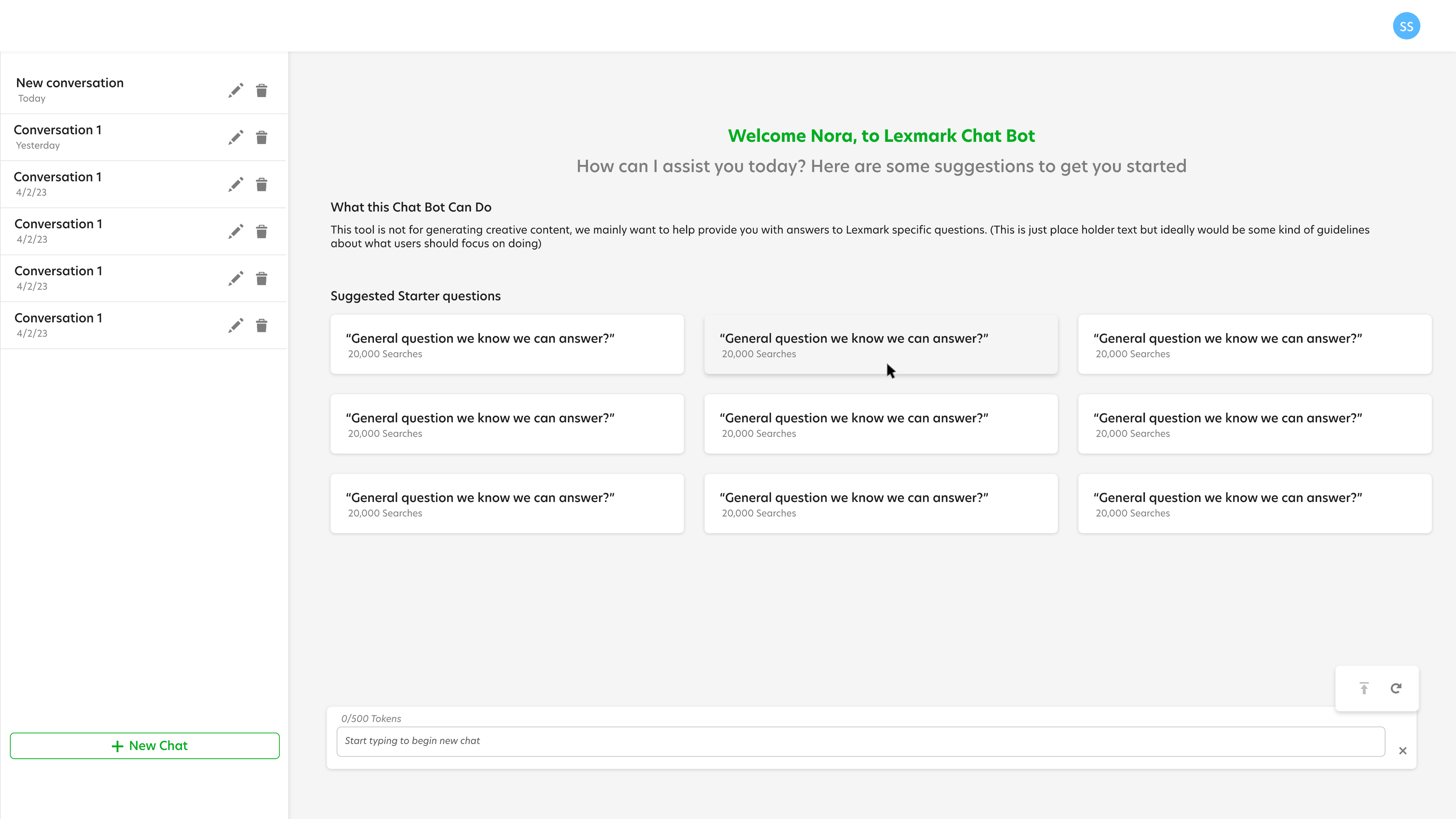

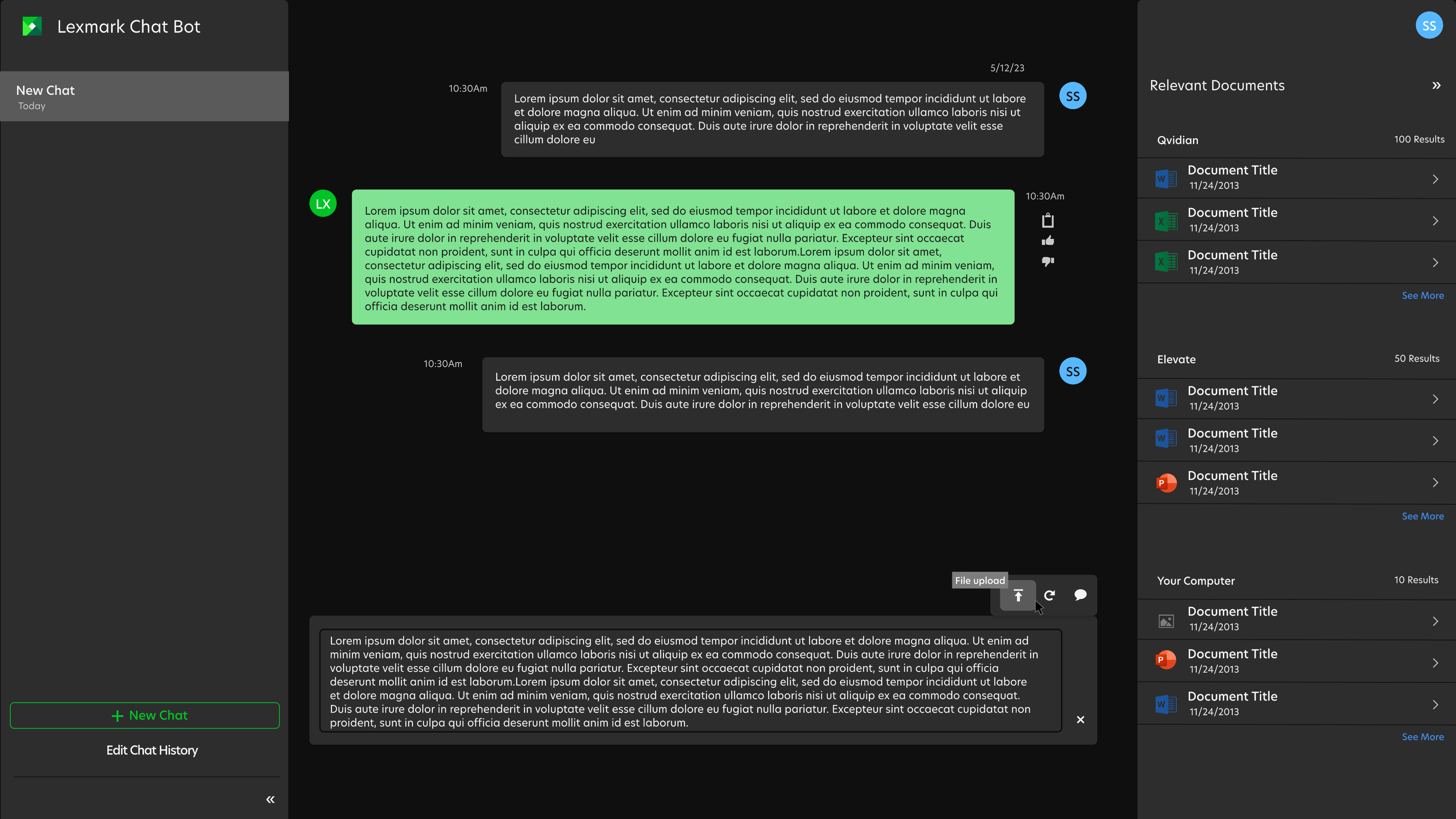

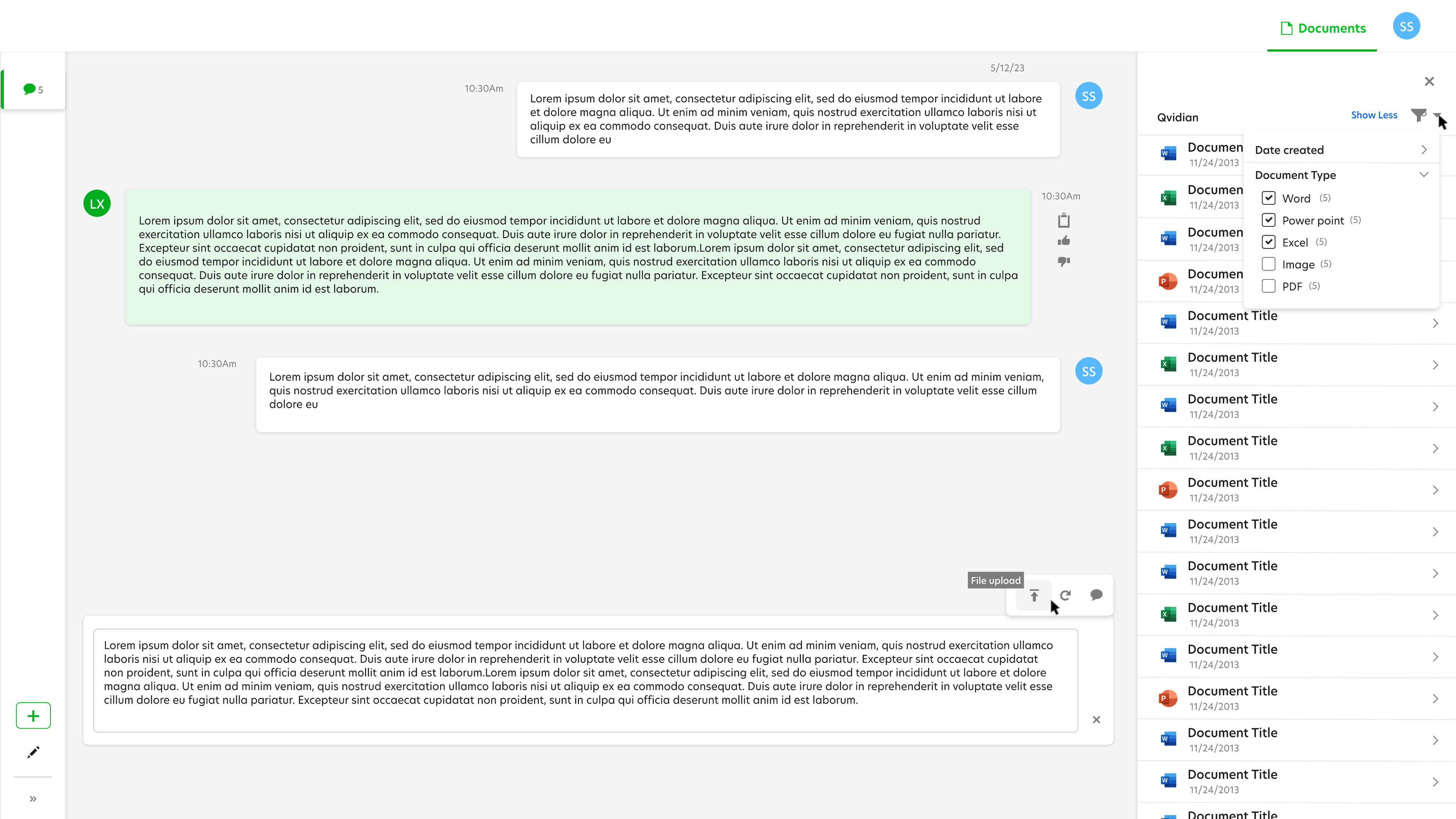

On the surface, using a Large Language Model (LLM) to sort through bulky internal documents looks like a "slam dunk" use case. But months after the tool went live, the silence from the user base was deafening.

The AI team saw a technical success; I saw a human disconnect. I needed to find out whether the problem was the model's performance, the users' comfort with AI, or a fundamental UI failure.